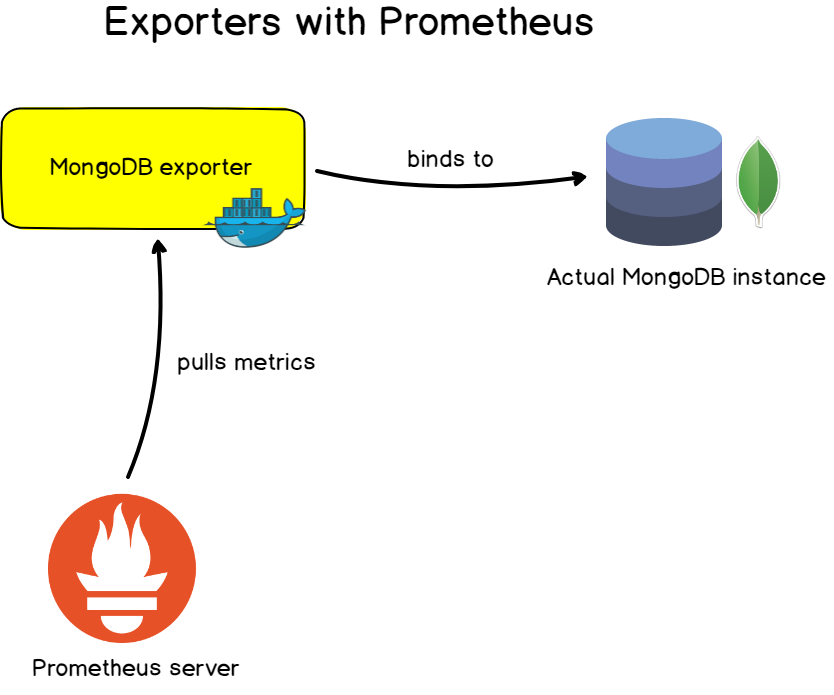

The time module is even simpler than conntrack, exposing only a single metric: `# HELP node_time_seconds System time in seconds since epoch (1970).`

On its own this may not seem of much use, as Prometheus already has the `time()` function to provide the evaluation time. Nor can you really compare `time()` to `node_time_seconds` to detect clock drift, as the difference is going to be as much as the scrape interval, and by the time you have 15-60s of clock drift it's probably already causing quite a few problems.

There is however the `timestamp()` function, which gives the timestamp of a sample. For samples from a scrape, this is when Prometheus begins the scrape. So as long as the time between the start of the scrape and the collection of the time module is low (which it usually will be), `timestamp(node_time_seconds) - node_time_seconds` lets you spot significant clock drift reasonably easily. It won't be accurate enough to spot being 10ms off, but 1s should be perfectly doable without having to dig into the more intricate timex and NTP metrics that the node exporter can provide.

This could also be used to see how much clock drift there was historically. If you were comparing logs across machines where at least one of them had time sync issues, it could help you figure out the true ordering of events.

The following default dashboards are automatically provisioned and configured by Azure Monitor managed service for Prometheus when you link your Azure Monitor workspace to an Azure Managed Grafana instance. Source code for these dashboards can be found in this GitHub repository.

Setup Node Exporter Binary. Step 1: Download the latest node exporter package.

In the data source settings in Grafana, put this in the URL field, then save and test.

Have questions on time and Prometheus? Contact us.
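As a rough illustration of the clock drift check described above, the sketch below sends `timestamp(node_time_seconds) - node_time_seconds` to the Prometheus HTTP API as an instant query and flags instances whose drift exceeds a threshold. The function names, the 1 second default threshold, and the example base URL are assumptions made for illustration, not part of the original write-up.

```python
import json
import urllib.parse
import urllib.request

# PromQL from the text: scrape start time minus the clock value the node reported.
DRIFT_EXPR = "timestamp(node_time_seconds) - node_time_seconds"


def build_query_url(base_url: str, expr: str = DRIFT_EXPR) -> str:
    """Build an instant-query URL for the Prometheus HTTP API (/api/v1/query)."""
    query = urllib.parse.urlencode({"query": expr})
    return base_url.rstrip("/") + "/api/v1/query?" + query


def drifting_instances(payload: dict, threshold_seconds: float = 1.0) -> dict:
    """Return {instance: drift_seconds} for series whose absolute drift exceeds the threshold."""
    if payload.get("status") != "success":
        raise ValueError("Prometheus query did not succeed")
    flagged = {}
    for series in payload["data"]["result"]:
        instance = series["metric"].get("instance", "unknown")
        # Instant vector values arrive as [evaluation_time, "value as string"].
        drift = float(series["value"][1])
        if abs(drift) > threshold_seconds:
            flagged[instance] = drift
    return flagged


def fetch_drift(base_url: str, threshold_seconds: float = 1.0) -> dict:
    """Query a live server; base_url is hypothetical, e.g. http://localhost:9090."""
    with urllib.request.urlopen(build_query_url(base_url), timeout=10) as resp:
        return drifting_instances(json.load(resp), threshold_seconds)
```

A positive drift here means the node's clock is behind the Prometheus server's clock at scrape start, and a negative one means it is ahead. Calling `fetch_drift("http://localhost:9090")` would run the check against a local server, assuming one is listening there.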
Before You Begin: Prometheus Node Exporter needs a Prometheus server to be up and running. If you would like to set up Prometheus, please see the Prometheus setup guide for Linux. Port 9100 must be open in the server firewall, as Prometheus reads metrics on this port.

The exposing services may still be working while Prometheus is unable to scrape them. I also tried to wget one of the Node Exporter endpoints from inside the container but got this: wget: can’t connect to remote host (): No route to host.

I used Prometheus and Node Exporter a while ago and had access to the node_filesystem metrics to monitor disk usage, but I've recently fired it up on some other servers (Ubuntu Linux) and those metrics seem to be missing.

For our example, we require a dashboard that is built to display Linux node metrics using Prometheus and Node Exporter, so we chose Linux Hosts Metrics Base.

When you run the command, it displays the IP address (172.17.0.3) of the Prometheus container, which needs to be combined with the port of the Prometheus server in the Grafana data source URL. Note: faca0c893603 is the container ID of the prom/prometheus server.
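When the node_filesystem metrics appear to be missing, one way to narrow things down is to look at the raw text Node Exporter serves on port 9100 and check which metric families are actually present. The sketch below parses exposition text that has already been fetched (for example, saved from the `/metrics` endpoint). The helper names are hypothetical, and the parsing is deliberately simplified rather than a full implementation of the exposition format.

```python
def metric_names(exposition_text: str) -> set:
    """Collect metric names from Prometheus text exposition output."""
    names = set()
    for line in exposition_text.splitlines():
        line = line.strip()
        # Skip blanks and the "# HELP" / "# TYPE" comment lines.
        if not line or line.startswith("#"):
            continue
        # A sample line looks like `name{labels} value` or `name value`.
        name = line.split("{", 1)[0].split(" ", 1)[0]
        names.add(name)
    return names


def has_family(exposition_text: str, prefix: str) -> bool:
    """True if any exposed metric name starts with the given prefix."""
    return any(name.startswith(prefix) for name in metric_names(exposition_text))
```

If `has_family(text, "node_filesystem_")` is false for the exporter's own output, the problem lies on the exporter side (for instance a disabled collector) rather than in Prometheus or Grafana.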

Please see the guide below to fix the issue! This will work as long as both Grafana and Prometheus are running as Docker containers, so before you begin, run the command below to make sure that both the Prometheus and Grafana containers are up: `docker ps`

To connect Prometheus to Grafana, you need the IP address of the Prometheus server that is running as a Docker container on the host. Use this command in your terminal to display all the container IDs: `docker ps -a`

You will see your Prometheus server container ID displayed, for example "faca0c893603". Copy the ID and run the command below in your terminal to see the IP address of your Prometheus server: `docker inspect -f '' faca0c893603`
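The lookup above can be sketched in a few lines of Python. Note that the format string in the `docker inspect` command above appears empty; the Go template used below is a commonly used one for printing a container's IP address and is an assumption here, not necessarily what the original guide used. The helper names are hypothetical, and port 9090 is assumed as the Prometheus default.

```python
import subprocess

# Assumed template: prints the container's IP address on each attached network.
IP_TEMPLATE = "{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}"


def inspect_command(container_id: str) -> list:
    """Build the docker inspect argument list for a container ID."""
    return ["docker", "inspect", "-f", IP_TEMPLATE, container_id]


def container_ip(container_id: str) -> str:
    """Run docker inspect and return the printed IP address (requires Docker)."""
    result = subprocess.run(
        inspect_command(container_id),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


def datasource_url(ip: str, port: int = 9090) -> str:
    """Build the URL to enter in the Grafana data source settings."""
    return f"http://{ip}:{port}"
```

With the example values from this post, `datasource_url("172.17.0.3")` gives `http://172.17.0.3:9090`, which is the value to paste into the URL field of the Prometheus data source in Grafana.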
